As you learned in the first part of our RoboSteve series, we are building a Machine Learning and Machine Vision platform inspired by our office dog Steve. There’s only one thing biological Steve loves more than food, and that’s playing with his girlfriend Miso.

In order to emulate this behaviour, RoboSteve will need a way to identify dogs, food, and other objects of interest, and a deep neural net should do the trick. This post gives a high-level overview of the architecture design and of implementing TensorRT on the Nvidia TX1.

Meet Miso, a canine companion of RoboSteve

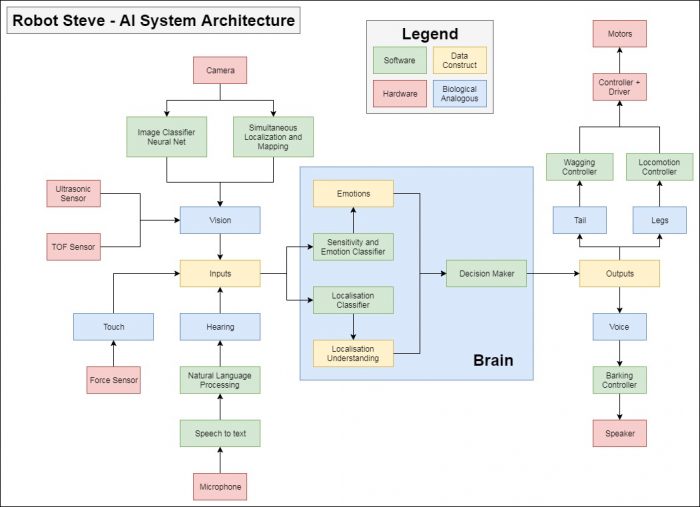

A while back, I designed a Systems Overview Diagram of how I would structure RoboSteve's AI.

RoboSteve’s AI System Architecture

The inputs (how the environment stimulates RoboSteve) are fed into the "brain", which makes decisions based on those inputs and generates some sort of output (how RoboSteve is going to stimulate the environment). There is no need for a feedback path from the outputs to the inputs in this block diagram, as the environment itself provides the feedback.
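To make the "no explicit feedback" point concrete, here is a minimal sketch of the input, brain, output loop. Everything in it is hypothetical: `run_loop`, `sense`, `think`, `act`, and the toy food bowl are illustrative names, not part of RoboSteve's code. The loop never feeds an output back to an input directly; the action changes the world, and the next `sense()` call observes that change.

```python
from typing import Callable, Dict, List

# Hypothetical representations: the post doesn't define how stimuli or actions look.
Stimulus = Dict[str, float]
Action = str


def run_loop(
    sense: Callable[[], Stimulus],
    think: Callable[[Stimulus], Action],
    act: Callable[[Action], None],
    steps: int,
) -> None:
    """Inputs -> brain -> outputs, repeated.

    There is no feedback edge in the loop itself: each action changes the
    environment, and the next call to sense() observes that change.
    """
    for _ in range(steps):
        stimulus = sense()
        action = think(stimulus)
        act(action)


# Toy environment: a food bowl that RoboSteve "eats" from one unit at a time.
world = {"food": 2.0}
actions: List[Action] = []


def sense() -> Stimulus:
    return {"food": world["food"]}


def think(s: Stimulus) -> Action:
    return "eat" if s["food"] > 0 else "bark"


def act(a: Action) -> None:
    actions.append(a)
    if a == "eat":
        world["food"] -= 1  # the environment changes; this is the feedback


run_loop(sense, think, act, steps=4)
print(actions)  # ['eat', 'eat', 'bark', 'bark']
```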

I could write pages about other elements of the system, everything from natural language processing to DC motor control, but as I said above, this post is about the image classifier neural net.

I spent some time looking around for a quick way to implement this, and I hit the hacker goldmine: someone had already done 90% of the work. It doesn't really surprise me that Nvidia put together a project that truly shows off their hardware.

Cloning the repo onto the TX1 and compiling was a breeze, so I decided to go with the onboard camera to keep things simple at the start. There were some initial problems getting the camera to work, but after some digging around, it was nothing a couple of lines of code couldn't fix. The project is extremely well put together, which made modifying the source and recompiling painless.

The net came pre-trained, so before jumping in to retrain it, I tried it out with the stock trained net. As this was just an afternoon project, I didn't do much research ahead of time and didn't know what to expect, but the outcome was truly awesome. Not only could it tell "dog" from "cat", it could classify specific breeds of dogs and cats, and even distinguish between different breeds of corgi! This is going to be more than sufficient for RoboSteve…

Testing some images on my phone

Classified the office oscilloscope with 97%+ confidence

Classified an SLR with 75%+ confidence

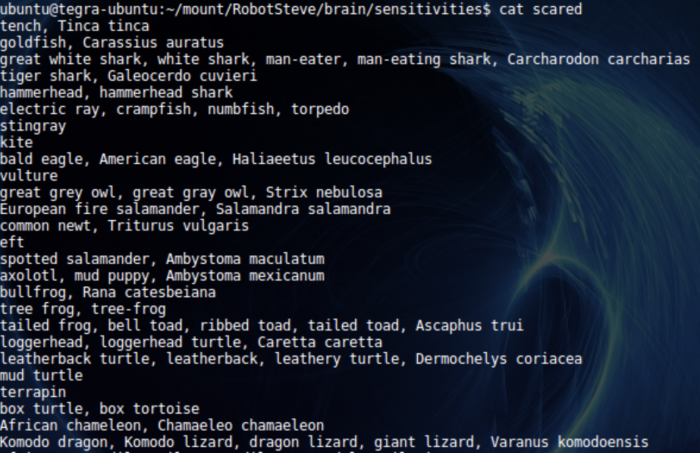

This particular net could classify exactly one thousand different "things". With that in place, I built a small list of possible emotions that RoboSteve could have:

- Angry

- Hungry

- Playful

- Sad

- Scared

It's simply a JSON file with a finite value associated with each emotion; in the block diagram above, it's listed as the "Emotions" data construct. Next, I built another file that holds the possible classified objects and associates each of them with emotions.
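As a rough illustration, the two files might look like the dictionaries below once serialized to JSON. Only the emotion names come from the list above; the file names (`emotions.json`, `object_emotions.json`), the object labels, and the weights are hypothetical placeholders, and in practice the labels would have to match the classifier's own label strings exactly. The sketch also includes a small sanity check that every mapping entry refers to a known emotion.

```python
import json
import tempfile
from pathlib import Path

# Emotion names come from the post; starting levels are illustrative.
EMOTIONS = {"angry": 0, "hungry": 0, "playful": 0, "sad": 0, "scared": 0}

# Hypothetical object label -> how strongly it pushes each emotion.
# Real labels must match whatever strings the classifier emits.
OBJECT_EMOTIONS = {
    "vacuum": {"scared": 5, "angry": 2},
    "hotdog": {"hungry": 4},
    "tennis ball": {"playful": 3},
}


def check_mapping(emotions: dict, mapping: dict) -> list:
    """Return (label, emotion) pairs that reference an unknown emotion."""
    return [
        (label, emotion)
        for label, deltas in mapping.items()
        for emotion in deltas
        if emotion not in emotions
    ]


with tempfile.TemporaryDirectory() as tmp:
    emotions_path = Path(tmp) / "emotions.json"        # hypothetical file name
    mapping_path = Path(tmp) / "object_emotions.json"  # hypothetical file name
    emotions_path.write_text(json.dumps(EMOTIONS, indent=2))
    mapping_path.write_text(json.dumps(OBJECT_EMOTIONS, indent=2))

    # Any other script can read the files back without importing this one.
    loaded_emotions = json.loads(emotions_path.read_text())
    loaded_mapping = json.loads(mapping_path.read_text())

print(check_mapping(loaded_emotions, loaded_mapping))  # []
```

Keeping both structures as plain JSON files means they can be edited by hand without touching any code.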

Looking into the things that make RoboSteve scared

Being a "Unix-y" guy, I simply wrote every object classified in real time above a certain confidence threshold to a file. With a quick Python script comparing the contents of these files, it's possible to compute RoboSteve's current emotions and write them to yet another file. Any other application can read that file, which keeps the design modular: RoboSteve's emotional state is accessible from anywhere at the application level.
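The comparison script isn't shown in the post, so here is a minimal sketch of the idea under a few stated assumptions: detections are appended as tab-separated `label`/`confidence` lines, the threshold is 0.6, each emotion is capped at 10, and the object-to-emotion weights are hypothetical. None of these values or file names come from the original project.

```python
import json
import tempfile
from pathlib import Path

CONFIDENCE_THRESHOLD = 0.6  # assumed; the post only says "a certain threshold"
MAX_LEVEL = 10              # assumed cap so each emotion stays finite

# Hypothetical object -> emotion weights (same shape as the mapping file).
OBJECT_EMOTIONS = {"vacuum": {"scared": 5}, "hotdog": {"hungry": 4}}
NEUTRAL = {"angry": 0, "hungry": 0, "playful": 0, "sad": 0, "scared": 0}


def log_detections(path: Path, detections: list) -> None:
    """Append (label, confidence) pairs that pass the threshold, one per line."""
    with path.open("a") as f:
        for label, confidence in detections:
            if confidence >= CONFIDENCE_THRESHOLD:
                f.write(f"{label}\t{confidence:.3f}\n")


def compute_emotions(detections_path: Path) -> dict:
    """Sum the emotion weights of every logged object, capped at MAX_LEVEL."""
    levels = dict(NEUTRAL)
    for line in detections_path.read_text().splitlines():
        label, _ = line.split("\t")
        for emotion, weight in OBJECT_EMOTIONS.get(label, {}).items():
            levels[emotion] = min(MAX_LEVEL, levels[emotion] + weight)
    return levels


with tempfile.TemporaryDirectory() as tmp:
    detections_file = Path(tmp) / "detections.tsv"       # hypothetical name
    emotions_file = Path(tmp) / "emotions_current.json"  # hypothetical name

    # Stand-in for the classifier's real-time output.
    log_detections(
        detections_file,
        [("vacuum", 0.91), ("hotdog", 0.42), ("vacuum", 0.77), ("cat", 0.88)],
    )

    current = compute_emotions(detections_file)
    emotions_file.write_text(json.dumps(current))

print(current)
# {'angry': 0, 'hungry': 0, 'playful': 0, 'sad': 0, 'scared': 10}
```

The low-confidence "hotdog" never reaches the file, and "cat" is logged but has no mapping entry, so only the two vacuum sightings affect the result. Because the output is just another JSON file, the face and sound scripts can read it without knowing anything about the classifier.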

It raises the question: how long do dog emotions last? Minutes? Hours? Days? I started with a zeroing time of 10 minutes, meaning that every 10 minutes RoboSteve goes back to his "neutral" emotion.
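One way to implement the 10-minute zeroing time is sketched below. `EmotionState` and `maybe_reset` are hypothetical names, and the clock is injectable (defaulting to `time.monotonic`) so the reset can be tested without actually waiting ten minutes.

```python
import time
from typing import Callable, Dict

RESET_INTERVAL_S = 10 * 60  # the 10-minute zeroing time from the post
NEUTRAL = {"angry": 0, "hungry": 0, "playful": 0, "sad": 0, "scared": 0}


class EmotionState:
    """Holds current emotion levels and snaps back to neutral every interval."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._last_reset = clock()
        self.levels: Dict[str, int] = dict(NEUTRAL)

    def maybe_reset(self) -> bool:
        """Reset to neutral if the interval has elapsed; return True if it did."""
        now = self._clock()
        if now - self._last_reset >= RESET_INTERVAL_S:
            self.levels = dict(NEUTRAL)
            self._last_reset = now
            return True
        return False


# Fake clock so the example runs instantly.
fake_now = [0.0]
state = EmotionState(clock=lambda: fake_now[0])
state.levels["scared"] = 7

fake_now[0] = 9 * 60                                # 9 minutes later
print(state.maybe_reset(), state.levels["scared"])  # False 7

fake_now[0] = 10 * 60                               # 10 minutes later
print(state.maybe_reset(), state.levels["scared"])  # True 0
```

This follows the post's fixed-interval reset. A possible alternative would be to measure the interval from the most recent stimulus instead, so that a dog who keeps seeing the vacuum stays scared; that is a design choice, not something the post describes.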

Another script changes RoboSteve’s face (below) depending on his emotions. There’s also a speaker on the screen, allowing RoboSteve to bark, growl and (hopefully not) cry.
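The face-and-sound script could be as simple as picking the strongest emotion and looking up matching assets. In this sketch, `pick_face_and_sound` and every file name are hypothetical, since the post doesn't say how faces or sounds are stored; all-zero levels are treated as the "neutral" face.

```python
from typing import Dict, Optional, Tuple

# Hypothetical asset names.
FACES = {
    "neutral": "face_neutral.png",
    "angry": "face_angry.png",
    "hungry": "face_hungry.png",
    "playful": "face_happy.png",
    "sad": "face_sad.png",
    "scared": "face_scared.png",
}
SOUNDS = {"angry": "growl.wav", "playful": "bark.wav", "sad": "whimper.wav"}


def pick_face_and_sound(levels: Dict[str, int]) -> Tuple[str, Optional[str]]:
    """Show the face for the strongest emotion; all-zero levels mean neutral."""
    emotion, level = max(levels.items(), key=lambda kv: kv[1])
    if level == 0:
        emotion = "neutral"
    return FACES[emotion], SOUNDS.get(emotion)


print(pick_face_and_sound({"angry": 0, "hungry": 0, "playful": 6, "sad": 0, "scared": 2}))
# ('face_happy.png', 'bark.wav')
print(pick_face_and_sound({"angry": 0, "hungry": 0, "playful": 0, "sad": 0, "scared": 0}))
# ('face_neutral.png', None)
```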

Happy RoboSteve

I envision the next step being a floating dog head rendered in OpenGL, reflecting all the emotions in a more fluid, realistic fashion.

At the end of the day, this was a really cool exercise in understanding some fairly high-level machine vision implementations and applying them to the office robot dog.

Read part three of the RoboSteve series.